Earlier this year, OpenAI released CLIP and showed that scaling a simple image-caption alignment objective on large-scale internet data enabled impressive zero-shot transfer to a myriad of downstream tasks, such as classification, OCR, and video action recognition.

Unfortunately, CLIP was largely overshadowed by the release of its sibling, DALL·E, which I think is a tragedy given how seismic CLIP’s repercussions on the field are going to be. In fact, when we look back in a few years, I suspect CLIP will be synonymous with the death of the static class sets that are pervasive in computer vision. Ironically, you could even say DALL·E turned out to be an unintentional red herring, as CLIP has since been creatively combined with state-of-the-art generative models to produce impressive images from text.

In this post, I want to highlight the versatility of CLIP by demonstrating its zero-shot capabilities on various tasks, such as reCAPTCHA solving and text-prompted detection. I’ve provided a Colab notebook for every example so you can feed in your own data and mess with CLIP to your heart’s content. If you come up with other creative ways of using CLIP, feel free to submit a PR to the ever-growing playground on GitHub!

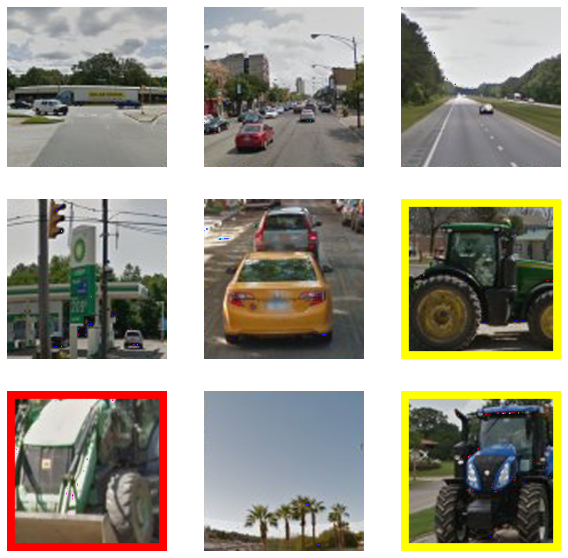

reCAPTCHA Solver

Crack “Select all images with ___” CAPTCHAs by splitting the image into a grid of patches and selecting the ones with the highest patch-text similarity in CLIP embedding space.
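
Here is a minimal sketch of the selection step, not the notebook's actual code. It assumes each patch has already been embedded with CLIP (for example via `model.encode_image` on each cropped tile and `model.encode_text(clip.tokenize([...]))` for the prompt, using OpenAI's `clip` package), so embeddings are shown as plain lists of floats. The helper names `grid_boxes` and `select_patches` are hypothetical, and choosing a fixed number `k` of tiles is an assumption; a similarity threshold would be an equally reasonable rule.

```python
import math
from typing import List, Sequence, Tuple

Box = Tuple[int, int, int, int]  # (left, top, right, bottom) in pixels


def grid_boxes(width: int, height: int, rows: int, cols: int) -> List[Box]:
    """Split a width x height image into a rows x cols grid of tiles, row-major."""
    boxes = []
    for r in range(rows):
        for c in range(cols):
            boxes.append((c * width // cols, r * height // rows,
                          (c + 1) * width // cols, (r + 1) * height // rows))
    return boxes


def cosine_similarity(a: Sequence[float], b: Sequence[float]) -> float:
    """Cosine similarity; assumes neither vector is all zeros."""
    dot = sum(x * y for x, y in zip(a, b))
    return dot / (math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b)))


def select_patches(patch_embs: Sequence[Sequence[float]],
                   text_emb: Sequence[float], k: int) -> List[int]:
    """Indices of the k tiles most similar to the text prompt, best first.

    Hypothetical rule: a fixed k stands in for however many tiles the CAPTCHA expects.
    """
    scores = [cosine_similarity(p, text_emb) for p in patch_embs]
    ranked = sorted(range(len(scores)), key=lambda i: scores[i], reverse=True)
    return ranked[:k]
```

For a standard 3x3 challenge, `grid_boxes(w, h, 3, 3)` yields the nine crop boxes (in the order PIL's `Image.crop` expects), and the returned indices map back to tiles to click.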

Naive Zero-shot Detection

A crude detector that splits an image into patches and returns the patch with the highest patch-caption similarity in CLIP embedding space.
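
The naive detector boils down to "enumerate windows, score each, take the argmax". The sketch below assumes square sliding windows with a fixed stride (the window size and stride are hypothetical parameters) and takes the scoring function as a callable, so it stays independent of any model. In practice `score_fn` would be an assumed wrapper that crops the box out of the image, runs CLIP's `preprocess` and `encode_image`, and returns cosine similarity with the encoded caption.

```python
from typing import Callable, List, Tuple

Box = Tuple[int, int, int, int]  # (left, top, right, bottom)


def sliding_windows(width: int, height: int, window: int, stride: int) -> List[Box]:
    """Square windows of side `window`, stepped by `stride`, lying fully inside the image."""
    boxes = []
    for top in range(0, height - window + 1, stride):
        for left in range(0, width - window + 1, stride):
            boxes.append((left, top, left + window, top + window))
    return boxes


def naive_detect(width: int, height: int, window: int, stride: int,
                 score_fn: Callable[[Box], float]) -> Tuple[Box, float]:
    """Return the window with the highest score (e.g. CLIP patch-caption similarity).

    Assumes window <= width and window <= height, so at least one window exists.
    """
    boxes = sliding_windows(width, height, window, stride)
    best = max(boxes, key=score_fn)
    return best, score_fn(best)
```

Because every window is a fixed square, objects much larger or smaller than `window` are poorly localized, which is exactly the crudeness the smarter detector addresses.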

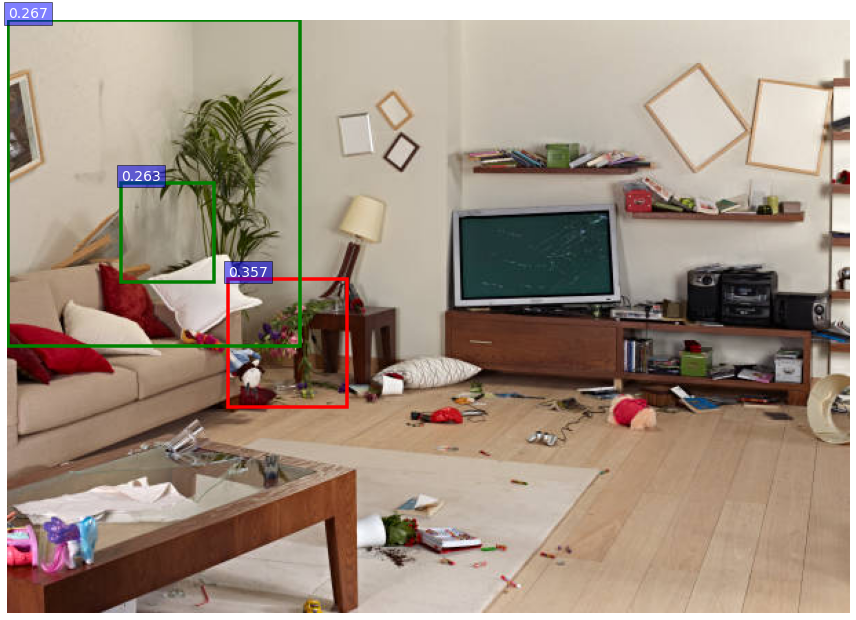

Smarter Zero-shot Detection

Automatically generate region proposals with selective search, compute their similarity to a natural language query in CLIP embedding space, and return the top-k detections after non-maximum suppression.
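
The last step, greedy non-maximum suppression followed by a top-k cut, can be sketched in plain Python as below. It assumes the proposals and their CLIP similarity scores are already computed; OpenCV's contrib module offers one selective search implementation (`cv2.ximgproc.segmentation.createSelectiveSearchSegmentation`), whose rectangles come as `(x, y, w, h)` and would need converting to corner form first. The IoU threshold of 0.5 and the helper name `top_k_detections` are assumptions, not values from the notebook.

```python
from typing import List, Sequence, Tuple

Box = Tuple[float, float, float, float]  # (x1, y1, x2, y2)


def iou(a: Box, b: Box) -> float:
    """Intersection-over-union of two corner-format boxes."""
    inter_w = max(0.0, min(a[2], b[2]) - max(a[0], b[0]))
    inter_h = max(0.0, min(a[3], b[3]) - max(a[1], b[1]))
    inter = inter_w * inter_h
    union = (a[2] - a[0]) * (a[3] - a[1]) + (b[2] - b[0]) * (b[3] - b[1]) - inter
    return inter / union if union > 0 else 0.0


def top_k_detections(boxes: Sequence[Box], scores: Sequence[float], k: int,
                     iou_threshold: float = 0.5) -> List[Tuple[Box, float]]:
    """Greedy NMS: visit boxes by descending score, keep one unless it overlaps a kept box."""
    order = sorted(range(len(boxes)), key=lambda i: scores[i], reverse=True)
    keep: List[int] = []
    for i in order:
        if all(iou(boxes[i], boxes[j]) <= iou_threshold for j in keep):
            keep.append(i)
            if len(keep) == k:
                break  # stop early once k detections survive
    return [(boxes[i], scores[i]) for i in keep]
```

Stopping once `k` boxes survive avoids comparing the long tail of low-scoring proposals; if PyTorch is already loaded for CLIP, `torchvision.ops.nms` does the suppression step on tensors.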

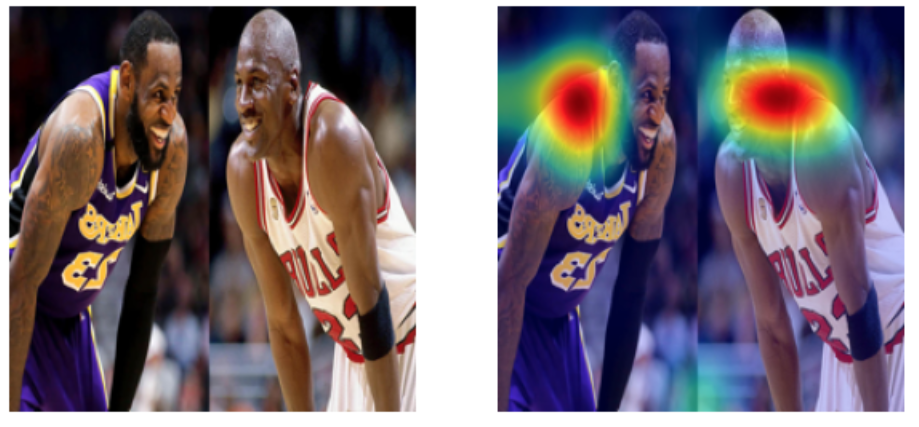

Text-Activated Saliency Maps

Visualize which parts of an image activate CLIP the most in response to a natural language query.
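
The post does not say how its saliency maps are computed, so the sketch below swaps in a simple, model-agnostic technique: occlusion sensitivity. Each patch is hidden in turn, and the drop in image-text similarity becomes that patch's saliency. To stay self-contained, the image is a 2D list of grayscale floats and `score_fn` is an assumed stand-in for "preprocess the image, encode it with CLIP, and return its similarity to the query". Filling occluded patches with the image's mean intensity is also an assumption.

```python
from typing import Callable, List

Image = List[List[float]]  # grayscale pixels, row-major


def occlude(image: Image, top: int, left: int, size: int, fill: float) -> Image:
    """Copy of `image` with a size x size square at (top, left) set to `fill`."""
    out = [row[:] for row in image]
    for y in range(top, min(top + size, len(image))):
        for x in range(left, min(left + size, len(image[0]))):
            out[y][x] = fill
    return out


def occlusion_saliency(image: Image, patch: int,
                       score_fn: Callable[[Image], float]) -> List[List[float]]:
    """One value per patch: how much the score drops when that patch is hidden."""
    h, w = len(image), len(image[0])
    mean = sum(map(sum, image)) / (h * w)
    base = score_fn(image)
    heat = []
    for top in range(0, h, patch):
        heat.append([base - score_fn(occlude(image, top, left, patch, mean))
                     for left in range(0, w, patch)])
    return heat
```

This costs one CLIP forward pass per patch, so coarse patches keep it tractable; gradient-based methods produce a map in a single backward pass at the price of needing access to model internals.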

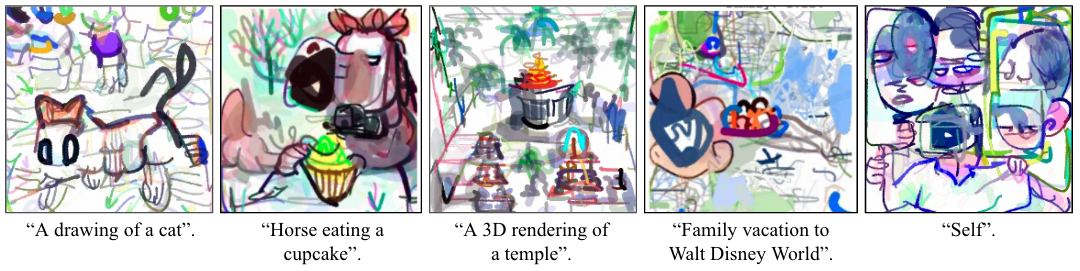

Prompt-conditioned Image Generation

CLIP has been creatively used to prompt-condition a bunch of generative models. Here are a few projects I’ve seen floating around Twitter: